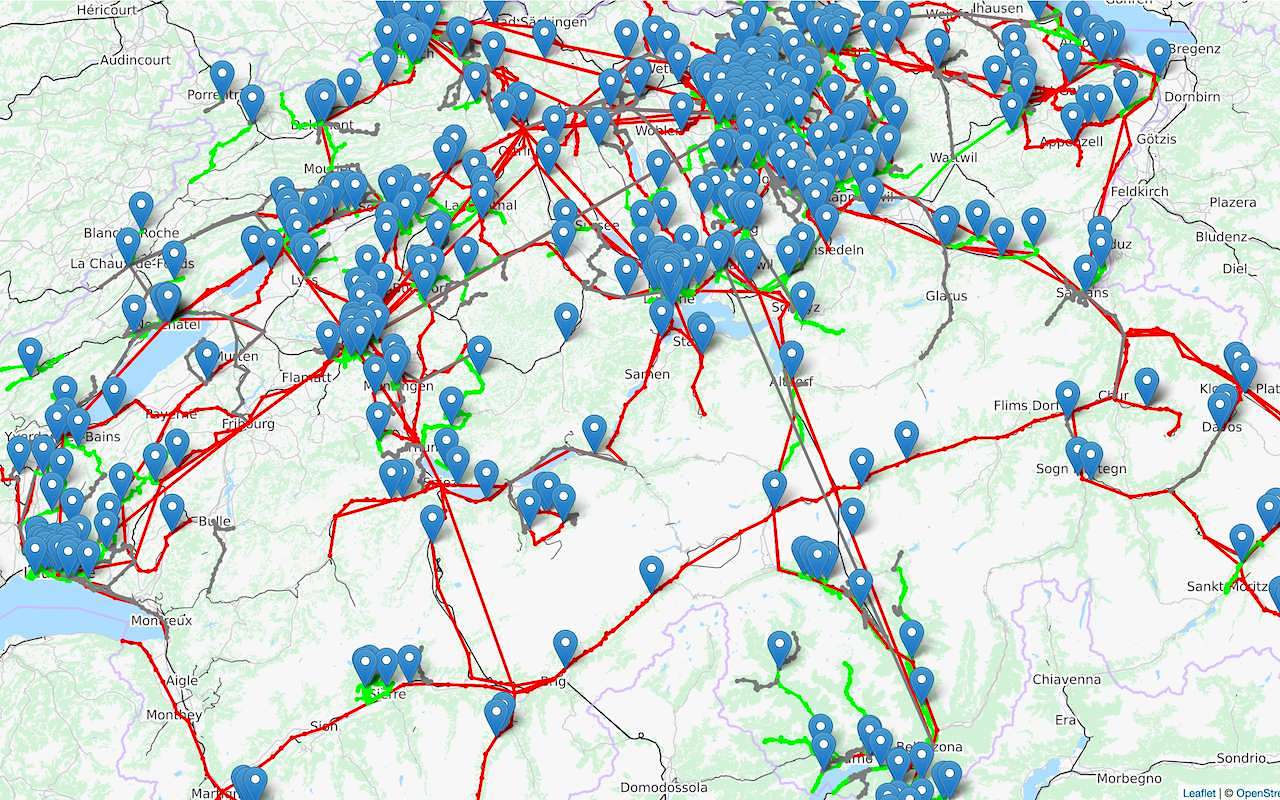

In my talk Introduction to Data Streaming, I demo an application that displays the location of all public transport vehicles in Switzerland in near real-time. Here’s a sample recording:

The demo is entirely based on Open principles: the code and its dependencies are Open Source, and the data is read from an Open Data endpoint. To develop the demo, I had to overcome some issues that come with leveraging Open Data. In this post, I’d like to share those issues and ease the path for fellow developers.

What is Open Data?

Before discussing issues of Open Data, we first need to describe what it is.

Open data is the idea that some data should be freely available to everyone to use and republish as they wish, without restrictions from copyright, patents or other mechanisms of control.

In that sense, Open Data is the logical step after Open Source: once software has been distributed freely, it makes sense to do likewise with what it processes. For a more in-depth discussion of Open Source, please check the post from last week.

Open Source takes its roots in individuals who started to create free software in their own time. On the other end of the spectrum, data is created by organizations, and thus belongs to them. Most private companies either believe that their data has too much value to be freely distributed, or don’t want to spend money to make it freely available.

For this reason, the Open Data movement is mostly a public initiative, as administrations believe the private sector can then leverage data to bring value to society.

In Europe, Open Data is encouraged at two levels:

- The European Union

- Individual states, e.g.:

I’ve no experience with other locations, but I recently stumbled upon the Californian Open Data initiative. Perhaps there’s such an initiative where you live?

Challenges

Now that we have made clear what Open Data is, let’s see what challenges one has to deal with when using it.

Access

The first challenge might be the most unsettling one. As developers, we live in a world of web services. Thus, we are led to believe the first step in using Open Data is to read the endpoint documentation. Unfortunately, most data, open or not, are not accessible in such a dynamic way. The sad truth is that the only way to access the vast majority of Open Data is by… downloading a file.

To address that challenge, one needs to:

- Check at what frequency the file is refreshed. The polling approach makes that first step fundamental.

- Set up a batch job that regularly downloads the file and processes it somehow
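As a minimal sketch of such a batch job, the Python snippet below downloads a file and only processes it when its content has changed since the last poll. The names `fetch`, `poll_once`, and `poll_forever` are hypothetical, and so is the choice of a SHA-256 digest to detect a refresh; the polling interval should come from the refresh frequency the provider documents.

```python
import hashlib
import time
import urllib.request
from typing import Callable, Optional


def fetch(url: str) -> bytes:
    """Download the whole file into memory (fine for small files only)."""
    with urllib.request.urlopen(url, timeout=30) as response:
        return response.read()


def poll_once(
    download: Callable[[], bytes],
    last_digest: Optional[str],
    process: Callable[[bytes], None],
) -> str:
    """Download the file, process it only if it changed, return its digest."""
    content = download()
    digest = hashlib.sha256(content).hexdigest()
    if digest != last_digest:
        process(content)
    return digest


def poll_forever(url: str, interval_seconds: float,
                 process: Callable[[bytes], None]) -> None:
    """Naive loop; a real job would more likely run under a scheduler."""
    digest: Optional[str] = None
    while True:
        digest = poll_once(lambda: fetch(url), digest, process)
        time.sleep(interval_seconds)
```

Passing the download step as a function keeps `poll_once` easy to exercise without touching the network.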

Because a file is just a file, some implicit contracts are void. For example:

- the filename might change. For example, it might contain an increasing counter. Or it might contain the date and/or the time. In all cases, let’s hope the change can be easily inferred.

- the file’s structure might change. Think about an Excel file that may gain or lose columns.

- the file format might also change, from PDF to Excel

- etc.

One needs to be aware of those changes, because in most cases, they cannot be handled automatically.
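Even when a change cannot be handled automatically, it can at least be detected early. The sketch below assumes a hypothetical naming scheme (`timetable-YYYY-MM-DD.csv`) and hypothetical column names; it infers the filename for a given day and fails loudly when expected columns are missing, rather than silently processing a file whose structure has changed.

```python
import csv
import io
from datetime import date
from typing import List, Sequence

# Hypothetical columns the downstream code relies on.
EXPECTED_COLUMNS = ["stop_id", "arrival_time"]


def dated_filename(day: date) -> str:
    """Infer the file name for a day, assuming a date-based naming scheme."""
    return f"timetable-{day:%Y-%m-%d}.csv"


def missing_columns(csv_text: str, expected: Sequence[str]) -> List[str]:
    """Return the expected columns that are absent from the CSV header."""
    header = next(csv.reader(io.StringIO(csv_text)), [])
    return [column for column in expected if column not in header]


def check_structure(csv_text: str, expected: Sequence[str]) -> None:
    """Raise instead of processing a file whose structure has changed."""
    missing = missing_columns(csv_text, expected)
    if missing:
        raise ValueError(f"structure changed, missing columns: {missing}")
```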

Format

One might assume that Open Data implies Open Format. Nothing could be further from the truth: the data might come as Microsoft Excel, PDF, etc. It might even be compressed in ZIP format.

Of course, sometimes, one is fortunate enough to actually have a true Open Format. It might be CSV, JSON, or - <shudder> - XML.

Whatever the format, your tech stack needs to be able to decode it, whether directly or through a library.
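For instance, a CSV file delivered inside a ZIP archive can be decoded with the Python standard library alone. This is only a sketch: the function name `read_csv_from_zip` is hypothetical, and it assumes the member is UTF-8 encoded (with or without a byte order mark) and small enough to load in memory.

```python
import csv
import io
import zipfile
from typing import Dict, List


def read_csv_from_zip(archive: bytes, member: str) -> List[Dict[str, str]]:
    """Read one CSV member of an in-memory ZIP archive as a list of rows."""
    with zipfile.ZipFile(io.BytesIO(archive)) as zip_file:
        with zip_file.open(member) as raw:
            # utf-8-sig transparently drops a leading byte order mark.
            text = io.TextIOWrapper(raw, encoding="utf-8-sig", newline="")
            return list(csv.DictReader(text))
```

Formats such as Excel or PDF need a third-party library instead, which is part of the cost of a format that is not open.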

Standard

Some formats allow the data to follow a specific structure. For example, XML offers a dedicated grammar via an XML schema. It makes the structure explicit, but JSON and CSV files are also structured: the difference is that their structure is implicit. With implicitly-structured formats, ad hoc processes must be set up to handle structure changes, just as when the filename changes.

Whether the structure is implicit or explicit, the most important part is that it should be based on a widely shared standard. A standard not only guarantees some degree of stability, but also eases the integration of multiple providers. Imagine one wants to leverage different public transport data providers to compute the fastest connection between two locations. If all providers use the same standard, integrating an additional provider is a no-brainer. If every provider uses a different access/format/standard combination, each integration becomes a specific project and requires non-trivial development time.
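To illustrate the cost of missing standards, here is a sketch of what integrating two providers looks like when each one uses its own field names. Everything in it is hypothetical: the providers, their field names, and the common `Departure` record. The point is that every new provider requires its own adapter, whereas a shared standard would make a single one sufficient.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Mapping


@dataclass(frozen=True)
class Departure:
    """Common record the rest of the application works with."""
    stop: str
    line: str
    time: str


def from_provider_a(row: Mapping[str, str]) -> Departure:
    # Hypothetical field names used by a first provider.
    return Departure(stop=row["stop_name"], line=row["route"], time=row["departure"])


def from_provider_b(row: Mapping[str, str]) -> Departure:
    # Hypothetical field names used by a second provider.
    return Departure(stop=row["halt"], line=row["line_no"], time=row["dep"])


# One adapter per provider: this mapping grows with every integration.
ADAPTERS: Dict[str, Callable[[Mapping[str, str]], Departure]] = {
    "a": from_provider_a,
    "b": from_provider_b,
}


def normalize(provider: str, row: Mapping[str, str]) -> Departure:
    """Convert a provider-specific row into the common record."""
    return ADAPTERS[provider](row)
```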

Data correctness

If you happen to read articles about data science and data scientists, you might have stumbled upon the claim that they spend 80% of their time doing data curation, and only 20% of it doing "real" data science. Data engineering, whether the data is open or not, needs to face the same challenge. Every line of code needs to strictly follow defensive programming principles, and no assumption can be made. The list of gotchas is probably close to infinite. In the demo, I faced the following:

- Timezone issue. This is not a data correctness issue, but it’s closely related. I introduced a bug myself by using a timezone difference, i.e. +1, instead of a named timezone, i.e. Europe/Paris. Everything was working, until the local time changed to summer time. Then, I got strange results.

- Real incorrect data. One file contains time. How would you interpret such time: 24:10:00, or even 25:10:00? One needs to decide what to do:

  - Discard it?

  - Interpret it as respectively 00:10:00 and 01:10:00 the same day?

  - Interpret it as respectively 00:10:00 and 01:10:00 the next day?

  It’s a tough decision. In the first case, possibly meaningful data is lost. In the two others, the wrong assumption might lead to dire consequences.
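Both gotchas can be sketched in a few lines of Python. This is not the code of the demo: the function names and the `policy` parameter are hypothetical. The first function shows why a fixed +1 offset and the named Europe/Paris zone diverge in summer; the second makes the decision about times such as 24:10:00 explicit by implementing the three options as a parameter, without claiming which one is right for a given dataset.

```python
from datetime import date, datetime, timedelta, timezone, tzinfo
from typing import Optional
from zoneinfo import ZoneInfo  # Python 3.9+, relies on available tz data

FIXED_PLUS_ONE = timezone(timedelta(hours=1))  # the bug: ignores summer time
PARIS = ZoneInfo("Europe/Paris")               # named zone: follows summer time


def to_utc(local_naive: datetime, zone: tzinfo) -> datetime:
    """Interpret a naive local time in the given zone and convert it to UTC."""
    return local_naive.replace(tzinfo=zone).astimezone(timezone.utc)


def parse_time(day: date, raw: str, zone: tzinfo,
               policy: str = "next_day") -> Optional[datetime]:
    """Parse HH:MM:SS where HH may be 24 or more.

    policy is one of "discard", "same_day", or "next_day".
    """
    if policy not in ("discard", "same_day", "next_day"):
        raise ValueError(f"unknown policy: {policy}")
    hours, minutes, seconds = (int(part) for part in raw.split(":"))
    extra_days, hour = divmod(hours, 24)
    if extra_days and policy == "discard":
        return None  # possibly meaningful data is lost
    if policy == "same_day":
        extra_days = 0  # 25:10:00 becomes 01:10:00 on the same day
    target = day + timedelta(days=extra_days)
    return datetime(target.year, target.month, target.day,
                    hour, minutes, seconds, tzinfo=zone)
```

With these definitions, noon on a winter day maps to the same UTC instant for both zones, while noon on a July day differs by one hour, which matches the strange results described above.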

Conclusion

Data has been referred to as the new oil that powers the information systems of the 21st century. Open Data is free for anybody to use. There are some challenges that one needs to be aware of, but they can be overcome with some time and prior planning on how to solve them in one’s own context.