The subject of testing is vast. It may seem simple from the outside, but it’s not. For example, one may define testing as checking that the software is fit for its purpose. But it encompasses a lot more: for example, mutation testing verifies that assertions do actually assert. In this post, I’d like to touch on some testing flavors, their purposes, and how they compare to each other.

The need for testing

In an ideal world, we wouldn’t need testing. We would just write bug-free performant code that would be the perfect direct implementation of the requirements.

Yet, the process of transforming requirements into code needs to be checked. Software development involves requirements gathering, writing documents, communicating with people, coping with organizational issues, etc. For the sake of simplification, in the rest of this post, we will assume that this process is perfect, even though it’s not, and focus on code.

Let’s mention the elephant in the room: some platforms, e.g. Coq, do allow formal proof. This means it can be mathematically proven that the code conforms to its specification. However, most of those platforms stay within the bounds of academia. As far as I know, there aren’t that many attempts at using formal verification in the industry.

By removing formal proof from the equation (pun intended), the only option left is testing. But testing doesn’t prove the absence of bugs. In fact, testing is not only about bugs: it covers a lot of different areas. Let’s look at some of them.

Unit Testing

Unit Testing is a fairly well-documented discipline: regardless of the language, a ton of books have been published on the subject. They generally repeat the same things.

[…] Unit testing is a software testing method by which individual units of source code […] are tested to determine whether they are fit for use.

The only strongly-debated point is what constitutes a unit: in OOP, some argue it’s the class; others argue that it’s a module i.e. a set of collaborating classes.
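To make this concrete, here is a minimal sketch in Python where the unit is a single class. The `Cart` class and its methods are made up for illustration; the tests are plain functions with assertions, which a test runner could discover, but nothing here depends on one.

```python
class Cart:
    """Hypothetical unit under test: a shopping cart that sums item prices."""

    def __init__(self):
        self._items = []

    def add(self, name, price):
        if price < 0:
            raise ValueError("price must not be negative")
        self._items.append((name, price))

    def total(self):
        return sum(price for _, price in self._items)


def test_total_of_empty_cart_is_zero():
    assert Cart().total() == 0


def test_total_sums_item_prices():
    cart = Cart()
    cart.add("book", 12)
    cart.add("pen", 3)
    assert cart.total() == 15


def test_negative_price_is_rejected():
    try:
        Cart().add("refund", -1)
    except ValueError:
        return
    raise AssertionError("expected ValueError")
```

Each test exercises the class in isolation: no database, no network, no other class.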

Integration Testing

While Unit Testing is fairly well defined and understood, Integration Testing seems like virgin territory in comparison. I actually wrote the book Integration Testing from the Trenches because I found no other books on the subject at that time.

Integration testing […] is the phase in software testing in which individual software modules are combined and tested as a group.

One of the core concepts behind Integration Testing is the System Under Test. In Unit Testing, the SUT is the unit (i.e. the class or the module, as described above). In Integration Testing, the SUT needs to be defined per test: it can be as small as two units collaborating, and as big as the whole system.
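The following sketch illustrates how the SUT changes between the two approaches. All names (`OrderService`, `InMemoryCatalog`, `StubCatalog`) are hypothetical: in the first test the SUT is `OrderService` alone, with its collaborator replaced by a stub; in the second, the SUT is the two real units working together.

```python
class InMemoryCatalog:
    """Hypothetical collaborator: looks up prices by SKU."""

    def __init__(self, prices):
        self._prices = dict(prices)

    def price_of(self, sku):
        return self._prices[sku]


class OrderService:
    """Hypothetical unit that depends on a catalog to compute a quote."""

    def __init__(self, catalog):
        self._catalog = catalog

    def quote(self, lines):
        # lines maps each SKU to the ordered quantity
        return sum(self._catalog.price_of(sku) * qty for sku, qty in lines.items())


class StubCatalog:
    """Test double: every product costs 10."""

    def price_of(self, sku):
        return 10


def test_order_service_alone():
    # Unit test: the SUT is OrderService only
    assert OrderService(StubCatalog()).quote({"anything": 2}) == 20


def test_order_service_with_real_catalog():
    # Integration test: the SUT is OrderService plus InMemoryCatalog
    catalog = InMemoryCatalog({"book": 12, "pen": 3})
    assert OrderService(catalog).quote({"book": 1, "pen": 2}) == 18
```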

There’s a raging debate about unit testing vs. integration testing. Some consider only the former, while others consider only the latter. If you want to know my personal stance on this, please read this previous post.

End-To-End Testing

At a time when most applications were Human-To-Machine, End-To-End Testing meant testing the flow from the User Interface to the database, and back again. Because web applications have become nearly ubiquitous, this involves the browser. For this reason, E2E Testing is very different from the previous approaches. Automating user interactions with the browser is not trivial, even if the available tools have improved over time.

The biggest issue in E2E Testing comes from the brittleness of the UI layer. Modern architectures cleanly separate into a JavaScript-based front-end and a REST back-end. To cope with the mentioned brittleness, it helps to first test the REST layer via Integration Testing.

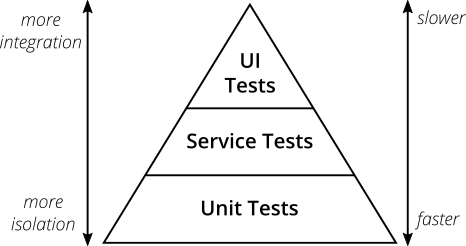

There’s a whole continuum from Unit Testing through Integration Testing to E2E Testing. This is pretty well summarized in the famous Testing Pyramid.

One can consider E2E Testing as the most complete form of Integration Testing: in that case, the SUT is the whole application.

System Testing

As time passes, more and more applications target Machine-To-Machine interactions instead of H2M ones. End-To-End Testing is to H2M what System Testing is to M2M.

The benefit is that there’s no browser involved. Hence, the same automation techniques from Unit Testing and Integration Testing can be reused.
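As a rough sketch of what that looks like, the test below starts a tiny HTTP service on a local port and calls it the way another machine would, using only the Python standard library. The `/health` endpoint and its JSON payload are assumptions made up for the example, not part of any real system.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Hypothetical M2M endpoint: GET /health returns a JSON status."""

    def do_GET(self):
        if self.path == "/health":
            body = json.dumps({"status": "up"}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # keep the test output quiet


def call_health_endpoint():
    # Port 0 lets the operating system pick a free port
    server = HTTPServer(("127.0.0.1", 0), HealthHandler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        port = server.server_address[1]
        # Bypass any proxy configured in the environment
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        with opener.open(f"http://127.0.0.1:{port}/health", timeout=5) as response:
            return response.status, json.loads(response.read())
    finally:
        server.shutdown()
        server.server_close()


def test_health_endpoint():
    status, payload = call_health_endpoint()
    assert status == 200
    assert payload == {"status": "up"}
```

The assertions have the same shape as in a unit test; only the size of the SUT differs.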

Performance Testing

Most of the previous approaches focus on testing Functional Requirements. It’s easy to forget that the fitness of a software component encompasses both Functional Requirements and Non-Functional Requirements. Performance counts as part of the NFR, along with reliability, etc.

Note that the SUT in performance tests can be the whole system, or just a sub-component deemed critical. Yet, in the latter case, asserting the performance of each individual component might not be enough to guarantee the performance of the whole system.

[…] performance testing is in general a testing practice performed to determine how a system performs in terms of responsiveness and stability under a particular workload.

From my experience, the term Performance Testing is overused, and much too general. It has many facets: here are the most common ones.

Load Testing

Load Testing is in general what is implicitly meant when people talk about Performance Testing. In this context, one sets different parameters for the tests. Those parameters model a representative load from the production environment. In reality, most organizations are unable to provide a quality sample of the production load, and the load is inferred.

For example, in an e-commerce context, testing parameters would include:

- The different types of requests: browsing the catalog, viewing distinct products, adding them to the cart, checking out, paying, etc.

- The distribution between them, e.g. 70% browsing, 20% viewing, 6% cart manipulation, 3% checkout, and 1% payment

- The number of concurrent requests

- The number of concurrent user sessions, and how they are spread between requests

- etc.
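The request mix above can be sketched as a weighted random plan. This only builds the sequence of request types to send; actually firing the requests at a system is left to whatever load-testing tool is in use. The names `REQUEST_MIX`, `build_request_plan` and `observed_shares` are made up for the example, and the seed is fixed so the plan is reproducible.

```python
import random
from collections import Counter

# Percentages taken from the e-commerce example above
REQUEST_MIX = {"browse": 70, "view": 20, "cart": 6, "checkout": 3, "payment": 1}


def build_request_plan(total_requests, mix=REQUEST_MIX, seed=42):
    """Draw request types at random, weighted by their share of the load."""
    rng = random.Random(seed)
    kinds = list(mix)
    weights = [mix[kind] for kind in kinds]
    return rng.choices(kinds, weights=weights, k=total_requests)


def observed_shares(plan):
    """Fraction of the plan taken by each request type that appears in it."""
    counts = Counter(plan)
    return {kind: count / len(plan) for kind, count in counts.items()}
```

With a large enough plan, the observed shares should land close to the configured ones, which is a cheap sanity check before starting a long run.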

Endurance Testing

In regular Load Testing, tests run until they are finished executing. The goal is to check that the system behaves according to one’s expectations under a specific load.

In Endurance Testing, also known as Soak Testing, the goal is to check how the system’s behavior evolves over time. In comparison, the duration of those tests is more on the order of days, or even weeks.

Soak testing involves testing a system with a typical production load, over a continuous availability period, to validate system behavior under production use.
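One simple way to reason about "behavior over time" is to compare a metric at the start of the run with the same metric at the end. The sketch below does that on a list of samples, e.g. memory usage or response time collected at regular intervals. The function names and the 20% tolerance are assumptions chosen for the example, not an established threshold.

```python
from statistics import mean


def drift_ratio(samples, window):
    """Mean of the last `window` samples divided by the mean of the first `window`."""
    if window <= 0 or len(samples) < 2 * window:
        raise ValueError("need at least two full windows of samples")
    return mean(samples[-window:]) / mean(samples[:window])


def has_degraded(samples, window, tolerance=1.2):
    """True when the metric grew by more than the tolerated factor over the run."""
    return drift_ratio(samples, window) > tolerance


# A flat metric versus one that grows steadily, as a leak would
stable = [100.0] * 50
leaking = [100.0 + 2 * i for i in range(50)]
```

Here `has_degraded(stable, 10)` is false while `has_degraded(leaking, 10)` is true. A short load test would only see the first few samples and miss the trend.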

Stress Testing

Stress Testing is the testing of the system until it breaks. The load is increased gradually. The goal of Stress Testing is to understand the performance limits of the system.

Stress testing (sometimes called torture testing) is a form of deliberately intense or thorough testing used to determine the stability of a given system, critical infrastructure or entity. It involves testing beyond normal operational capacity, often to a breaking point, in order to observe the results.

Conclusions of such experiments are along the lines of:

With a load of x requests/second, a fraction y of requests returns a 5xx error code.
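The gradual ramp-up can be sketched as a loop that raises the load until the error fraction crosses a threshold. In the sketch below, `simulated_error_fraction` is a made-up model standing in for a real measurement against a real system, and the capacity of 500 requests/second is arbitrary.

```python
def simulated_error_fraction(load, capacity=500):
    """Hypothetical system model: every request above capacity fails with a 5xx."""
    if load <= capacity:
        return 0.0
    return (load - capacity) / load


def find_breaking_point(measure, start, step, max_load, max_error_fraction):
    """Raise the load step by step.

    Returns the first (load, error fraction) pair above the threshold,
    or None if the system holds up to max_load.
    """
    load = start
    while load <= max_load:
        fraction = measure(load)
        if fraction > max_error_fraction:
            return load, fraction
        load += step
    return None
```

With this model, ramping from 100 to 2000 requests/second in steps of 100 and tolerating 5% of errors, the loop stops at 600 requests/second, where one request in six fails.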

Chaos Testing

Chaos Testing, also known as Chaos Engineering, is the practice of randomly removing components of a system to understand how it breaks, and check its overall resiliency under duress.

Chaos engineering is the discipline of experimenting on a software system in production in order to build confidence in the system’s capability to withstand turbulent and unexpected conditions.

The defining property of Chaos Testing is that it’s generally performed in the production environment. That doesn’t mean that experiments made in other environments cannot bring actionable feedback, far from it. However, everybody has experienced issues in production that never happened in previous environments. The root cause might be slightly different architectures, different data, higher load, etc. In the end, the only environment that needs to be battle-tested and bulletproof is production.

While counter-intuitive at first, the idea is now firmly entrenched in mature organizations that are serious about the resilience of their systems.
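The shape of such an experiment can be shown with a toy in-memory model: remove random components, then check whether the system still serves. This is only an illustration of the idea, far from a real production experiment; the `Cluster` class, its replica counts and `run_experiment` are all hypothetical.

```python
import random


class Cluster:
    """Hypothetical system: named services, each with a number of running replicas."""

    def __init__(self, replicas):
        self.replicas = dict(replicas)

    def kill_random_replica(self, rng):
        # Pick a service that still has at least one replica and remove one
        alive = [name for name, count in self.replicas.items() if count > 0]
        victim = rng.choice(alive)
        self.replicas[victim] -= 1
        return victim

    def is_serving(self):
        # The system serves only while every service has a replica left
        return all(count > 0 for count in self.replicas.values())


def run_experiment(cluster, kills, seed=0):
    """Remove up to `kills` random replicas; stop early if the system goes down."""
    rng = random.Random(seed)
    killed = []
    for _ in range(kills):
        killed.append(cluster.kill_random_replica(rng))
        if not cluster.is_serving():
            break
    return killed, cluster.is_serving()
```

A cluster with three replicas per service survives any two removals, while a cluster with a single replica per service goes down at the first one: exactly the kind of weakness the experiment is meant to surface.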

Security Testing

Just like Performance Testing is too broad a term, Security Testing covers many different areas. The most well-known form of Security Testing is Penetration Testing.

A penetration test […] is an authorized simulated cyberattack on a computer system, performed to evaluate the security of the system. […] The test is performed to identify both weaknesses […], including the potential for unauthorized parties to gain access to the system’s features and data, as well as strengths, enabling a full risk assessment to be completed.

Mutation Testing

Most forms of testing focus on the fitness of pieces of software, or of the system as a whole. Mutation Testing is unique in that its goal is to give insight into unit tests, and more specifically into the misleading Code Coverage metrics.

Mutation testing […] is used to design new software tests and evaluate the quality of existing software tests.

I’ve already written on the subject before. Please read it if you want to know more.
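Here is a hand-rolled sketch of the idea, with the mutation written out manually instead of being generated by a tool. All names are made up for the example. The weak suite executes every line of `is_adult`, so coverage looks perfect, yet it cannot tell the original from the mutant.

```python
def is_adult(age):
    return age >= 18


def is_adult_mutant(age):
    # Mutation: ">=" replaced with ">"
    return age > 18


def weak_suite(fn):
    """Covers every line of the function but skips the boundary value."""
    return fn(30) is True and fn(5) is False


def strong_suite(fn):
    """Same checks, plus the boundary value."""
    return weak_suite(fn) and fn(18) is True


def mutant_is_killed(suite, original, mutant):
    """A mutant is killed when the suite passes on the original and fails on the mutant."""
    return suite(original) and not suite(mutant)
```

The mutant survives `weak_suite` and is killed by `strong_suite`: the surviving mutant points straight at the missing assertion on the boundary.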

Exploratory Testing

A lot of testing approaches focus on regression bugs, i.e. bugs that appear in previously working software. For that, tests are automated: they are run for every build. If a previously passing test fails, the build fails as well.

The goal of Exploratory Testing is to catch bugs that are out of the testing harness’s reach. To achieve that, one interacts with the system in new and unexpected ways.

Exploratory testing is an approach to software testing that is concisely described as simultaneous learning, test design and test execution.

Because of its exploratory nature, this process is manual. My experience has shown me that Exploratory Testing is undervalued. That’s a pity, because I’ve witnessed testers who uncovered issues in the system that previous automated steps didn’t find.

Despite its manual nature, Exploratory Testing is not about clicking everywhere. It’s an engineering practice that requires discipline and a rigorous approach to documenting the steps that led to the issue. The best testers are also able:

- To isolate the issue

- To reproduce the steps that led to it

- To report the issue in a way that will ease the job of the developers who will need to fix the bug

User Acceptance Testing

Most forms of testing are performed by technical people: developers, automation testers, etc. By contrast, Acceptance Testing is performed by non-technical people, e.g. business analysts or end users. As the name implies, the goal is to assess whether they accept the software as fit for their usage.

User acceptance testing consists of a process of verifying that a solution works for the user.

Summary

| Kind | Goal | Actor | Automated |
|---|---|---|---|
| Unit Testing | Assess the fitness of a code unit | Developer | |
| Integration Testing | Check the collaboration of units | Developer | |
| System Testing | Verify the behavior of the system as a whole | Mix of different skillsets | |
| End-To-End Testing | Validate the flow from the GUI to the datastore and back | | |
| Performance Testing | Check different performance-related metrics | System administrator with specialized testing skills | |
| Chaos Testing | Verify the resiliency of a system | Mix of different skillsets | |
| Security Testing | Assess the vulnerability of a system | "Hacker" | |
| Mutation Testing | Check the quality of tests | Developer | |
| Exploratory Testing | Find hidden bugs | Manual tester | |
| User Acceptance Testing | Validate the system conforms to specifications | Business people | |

Conclusion

The post is already too long, and I only scratched the surface of available testing kinds. In conclusion, one needs to remember a couple of things:

- It’s easier to find a bug in the system than to point to its root cause. The bigger the SUT, the harder it is.

- The right balance between manual testing and automated testing efforts needs to be found: this balance is context-dependent.

- Testing is all about ROI. This is plain risk management: the goal is to evaluate risks, their nature, their probability, their impact, the respective mitigation actions, etc. in regard to the associated costs.

- Finally, testing is a strategic move: one should carefully plan one’s testing efforts and the reasons behind them before writing a single test (and yes, it’s against TDD).

Happy testing!

To go further:

- Why are you testing your software?

- Starting with Cucumber for end-to-end testing

- Unit tests vs integration tests, why the opposition?

- Software performance testing

- Unit testing

- Integration testing

- Software testing

- Soak testing

- Stress testing

- Chaos engineering

- Security testing

- Penetration test

- Mutation testing

- Exploratory testing

- Acceptance testing

- Smoke testing